Nvidia DGX Station Launch Brings AI Supercomputing to the Desktop

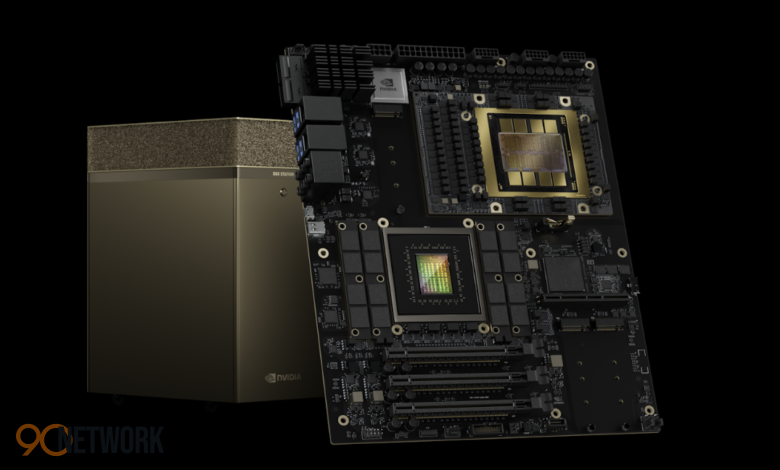

The Nvidia DGX Station has officially launched, introducing a new class of desktop AI systems powered by Nvidia’s GB300 Grace Blackwell Ultra Desktop Superchip. Designed for developers, researchers, and enterprises, the system brings data-center-class performance to a deskside workstation.

As AI models grow rapidly in size and complexity, this release highlights a shift toward running high-performance AI workloads locally instead of relying entirely on cloud infrastructure.

A New Era of Desktop AI Computing

The Nvidia DGX Station sits between Nvidia’s compact AI systems, such as the DGX Spark, and large-scale data center solutions. It delivers significantly more power than entry-level AI desktops while still fitting a workstation form factor.

The system is now available to order through major hardware partners and is expected to begin shipping in the coming months.

This move reflects Nvidia’s strategy to expand AI computing beyond centralized infrastructure and into individual workspaces.

Powered by the GB300 Grace Blackwell Superchip

At the heart of the Nvidia DGX Station is the GB300 Grace Blackwell Ultra Desktop Superchip, which combines a Grace CPU and a Blackwell Ultra GPU in a single unified system.

Key hardware features include:

- 72-core Grace CPU

- Blackwell Ultra GPU

- High-speed NVLink-C2C interconnect

- Unified memory architecture

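Because the CPU and GPU are linked by NVLink-C2C and share a coherent memory space, data does not have to be copied back and forth over a slower PCIe connection. This reduces a bottleneck common in traditional systems, where the CPU and GPU each have separate memory.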

Massive Memory and Performance Capabilities

One of the standout features of the Nvidia DGX Station is its enormous memory capacity and processing power.

Key specifications:

- Up to 784GB total memory

- High-bandwidth LPDDR5X system memory

- HBM3e GPU memory with extremely high throughput

- Up to 20 petaflops of AI compute performance

This combination lets the system hold very large AI models and datasets in memory on a single desktop machine.

Designed for Advanced AI Workloads

The Nvidia DGX Station is built specifically for demanding AI applications.

Primary use cases include:

- Training large language models

- Running AI inference locally

- Developing autonomous AI agents

- Data science and analytics

With this memory capacity, the system can support models of up to one trillion parameters, allowing developers to work on cutting-edge AI projects without relying on external infrastructure.
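As a rough check on the one-trillion-parameter figure, the following sketch estimates how much memory a model's weights alone occupy at a few common numeric precisions and compares that with the 784GB total quoted above. It is a back-of-the-envelope illustration under stated assumptions, not an Nvidia sizing tool: it assumes 1 GB = 10^9 bytes and ignores the KV cache, activations, and (for training) gradients and optimizer state, all of which add substantially. The function names are hypothetical.

```python
# Back-of-the-envelope sketch (assumption: weights only; KV cache,
# activations, gradients, and optimizer state are ignored).

# Storage cost per parameter for common numeric formats, in bytes.
BYTES_PER_PARAM = {
    "fp16": 2.0,
    "fp8": 1.0,
    "fp4": 0.5,
}

# Total memory figure quoted for the DGX Station (CPU + GPU memory combined).
TOTAL_MEMORY_GB = 784


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Hypothetical helper: weight storage in GB (1 GB = 10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits(num_params: float, precision: str,
         capacity_gb: float = TOTAL_MEMORY_GB) -> bool:
    """Hypothetical helper: True if the weights alone fit in capacity_gb."""
    return weight_memory_gb(num_params, precision) <= capacity_gb


if __name__ == "__main__":
    params = 1e12  # one trillion parameters
    for precision in ("fp16", "fp8", "fp4"):
        gb = weight_memory_gb(params, precision)
        print(f"{precision}: {gb:,.0f} GB of weights, fits={fits(params, precision)}")
```

Under these assumptions, a trillion-parameter model needs about 2,000GB for its weights at FP16, 1,000GB at FP8, and 500GB at 4-bit precision, so only the 4-bit case fits within 784GB. That suggests the figure applies to running or fine-tuning heavily quantized models rather than full-precision training. The 784GB is also split between LPDDR5X system memory and HBM3e GPU memory, so not all of it is available at HBM bandwidth.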

Bridging the Gap Between Desktop and Data Center

Traditionally, high-end AI workloads required access to large data centers. The Nvidia DGX Station changes this by bringing similar capabilities to a deskside system.

Key advantages:

- Local data processing for improved privacy

- Reduced dependency on cloud services

- Faster iteration for developers

- Seamless scaling to data center environments

Applications developed on the system can be moved to larger Nvidia platforms in the cloud or in enterprise data centers with little rework, since they target the same Nvidia software stack.

Built for Developers, Researchers, and Enterprises

The Nvidia DGX Station targets professionals who need extreme computing power in a more accessible format.

Ideal users include:

- AI engineers and developers

- Data scientists

- Research institutions

- Enterprises working with sensitive data

Its ability to operate as both a personal workstation and a shared compute node makes it flexible for different environments.

Competition in the AI Hardware Market

The launch strengthens Nvidia’s position in the rapidly evolving AI hardware market.

Key competitive factors include:

- Performance per watt

- Memory capacity

- Local versus cloud computing capabilities

- Ecosystem integration


As demand for AI infrastructure grows, competition among hardware providers is expected to intensify.

Why Local AI Computing Is Gaining Importance

The Nvidia DGX Station reflects a broader industry shift toward local AI processing.

Benefits of local AI systems:

- Enhanced data security

- Lower latency

- Greater control over workloads

- Reduced long-term cloud costs

This trend is particularly important for industries dealing with sensitive or regulated data.

Challenges and Considerations

Despite its impressive capabilities, there are some challenges to consider.

High Cost

Systems with this level of performance are expected to be expensive, making them accessible primarily to enterprises and professionals.

Power and Cooling Requirements

High-performance hardware requires advanced cooling and energy management, especially in workstation environments.

The Future of AI Workstations

The Nvidia DGX Station points to a future in which powerful AI systems become more accessible outside of large data centers.

Future developments may include:

- More compact AI supercomputers

- Increased efficiency and lower power consumption

- Wider adoption across industries

- Integration with next-generation AI models

This evolution is likely to keep reshaping how AI is developed and deployed.

What This Means for the Industry

For the tech industry, this launch represents a major step toward decentralizing AI infrastructure.

Key implications:

- Faster innovation cycles

- Increased accessibility for developers

- Greater competition in AI hardware

- Expansion of edge and local AI computing

The ability to run advanced models locally could transform workflows across multiple sectors.

Final Thoughts

The Nvidia DGX Station introduces a powerful new option for AI development, combining cutting-edge hardware with a workstation-friendly design. By bringing supercomputing capabilities to the desktop, Nvidia is helping redefine how and where AI work gets done.

As AI continues to evolve, systems like this will play a critical role in enabling the next generation of innovation.